Public Cloud – Flexible Engine

Data Pipeline Service – automate data transport and transformation

Automate the transport and transformation of data between departments

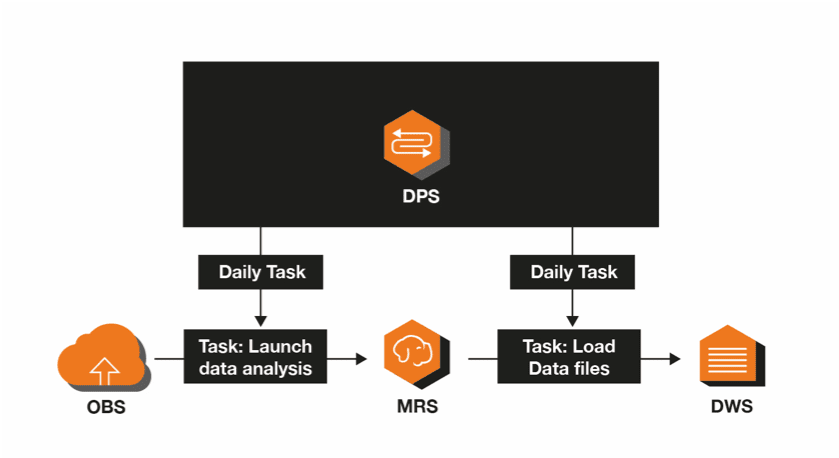

Data Pipeline Service (DPS) is a web service that runs on the Public Cloud. It lets you automate the transport and transformation of data between departments.

With DPS, you define a “pipeline” that describes the data processing tasks, the order in which they run, and their schedule. DPS then schedules and controls task execution according to the predefined schedule and the dependencies between tasks, so that data is processed and moved between departments as planned.
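As an illustration of what a pipeline definition captures (tasks, their order, and a schedule), here is a minimal Python sketch. It is not the DPS API: the `Pipeline` class, its `add_task` and `run` methods, and the cron-style `schedule` string are hypothetical names chosen for this example, and the schedule is stored without being interpreted.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, Iterable, List, Set


@dataclass
class Pipeline:
    """Hypothetical in-memory pipeline model; an assumption, not the DPS API."""

    schedule: str  # assumed cron-style string; stored only, never parsed here
    tasks: Dict[str, Callable[[], None]] = field(default_factory=dict)
    depends_on: Dict[str, Set[str]] = field(default_factory=dict)

    def add_task(self, name: str, action: Callable[[], None],
                 after: Iterable[str] = ()) -> None:
        """Register a task and the tasks that must finish before it."""
        self.tasks[name] = action
        self.depends_on[name] = set(after)

    def execution_order(self) -> List[str]:
        """Return task names so that every task follows its dependencies."""
        return list(TopologicalSorter(self.depends_on).static_order())

    def run(self) -> List[str]:
        """Run every task once, in dependency order, and return that order."""
        order = self.execution_order()
        for name in order:
            self.tasks[name]()
        return order


# Example: a three-step pipeline planned for 02:00 every day.
log: List[str] = []
pipeline = Pipeline(schedule="0 2 * * *")
pipeline.add_task("extract", lambda: log.append("extract"))
pipeline.add_task("transform", lambda: log.append("transform"), after=["extract"])
pipeline.add_task("load", lambda: log.append("load"), after=["transform"])
executed = pipeline.run()
```

In this sketch the execution order comes from the declared dependencies rather than from the order in which tasks were added, which mirrors the idea of a scheduler that respects relationships between tasks.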

Benefits

Ease-of-use

To create or customize a pipeline, you drag and drop activities and data sources onto Canvas, the graphical pipeline editor, and then define their properties. This can significantly reduce your development costs.

High reliability

Thanks to its highly available, fault-tolerant design, DPS schedules and runs pipelines and activities efficiently and reliably. If an activity fails, DPS automatically retries it. If the failure persists, DPS sends you a failure notification through the Simple Message Notification service.
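The retry-then-notify behavior described above can be sketched in a few lines of Python. This is an illustration only: `run_with_retries`, the attempt limit of 3, and the message format are assumptions, and the `notify` callback merely stands in for a notification service; it does not call the Simple Message Notification API.

```python
from typing import Callable, List, Optional


def run_with_retries(activity: Callable[[], None],
                     max_attempts: int,
                     notify: Callable[[str], None]) -> bool:
    """Run an activity, retrying on failure; notify if every attempt fails.

    Hypothetical helper: the retry policy and message text are assumptions.
    """
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            activity()
            return True  # success, no notification needed
        except Exception as exc:  # broad catch is acceptable for a sketch
            last_error = exc
    notify(f"activity failed after {max_attempts} attempts: {last_error}")
    return False


# A transient failure: the activity succeeds on the third attempt.
attempts = {"count": 0}


def flaky() -> None:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("temporary error")


# A persistent failure: every attempt raises.
def broken() -> None:
    raise RuntimeError("bad input")


notifications: List[str] = []
flaky_ok = run_with_retries(flaky, 3, notifications.append)
broken_ok = run_with_retries(broken, 3, notifications.append)
```

Here only the persistent failure produces a notification; the transient one is absorbed by the retries.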

Interconnection with multiple services

DPS provides a variety of data sources and prebuilt activities, and interconnects with multiple Public Cloud services for storing, moving and analyzing data in the cloud. This makes those services easier to use together.